Page 337 - Invited Paper Session (IPS) - Volume 2

P. 337

IPS273 Tomoki Tokuda et al.

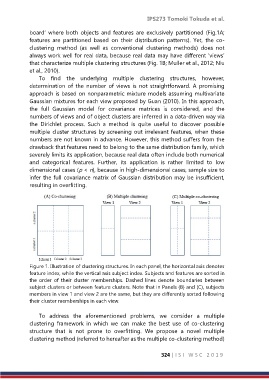

board’ where both objects and features are exclusively partitioned (Fig.1A;

features are partitioned based on their distribution patterns). Yet, the co-

clustering method (as well as conventional clustering methods) does not

always work well for real data, because real data may have different ‘views’

that characterize multiple clustering structures (Fig. 1B; Muller et al., 2012; Niu

et al., 2010).

To find the underlying multiple clustering structures, however,

determination of the number of views is not straightforward. A promising

approach is based on nonparametric mixture models assuming multivariate

Gaussian mixtures for each view proposed by Guan (2010). In this approach,

the full Gaussian model for covariance matrices is considered, and the

numbers of views and of object clusters are inferred in a data-driven way via

the Dirichlet process. Such a method is quite useful to discover possible

multiple cluster structures by screening out irrelevant features, when these

numbers are not known in advance. However, this method suffers from the

drawback that features need to belong to the same distribution family, which

severely limits its application, because real data often include both numerical

and categorical features. Further, its application is rather limited to low

dimensional cases (p < n), because in high-dimensional cases, sample size to

infer the full covariance matrix of Gaussian distribution may be insufficient,

resulting in overfitting.

Figure 1. Illustration of clustering structures. In each panel, the horizontal axis denotes

feature index, while the vertical axis subject index. Subjects and features are sorted in

the order of their cluster memberships. Dashed lines denote boundaries between

subject clusters or between feature clusters. Note that in Panels (B) and (C), subjects

members in view 1 and view 2 are the same, but they are differently sorted following

their cluster memberships in each view.

To address the aforementioned problems, we consider a multiple

clustering framework in which we can make the best use of co-clustering

structure that is not prone to overfitting. We propose a novel multiple

clustering method (referred to hereafter as the multiple co-clustering method)

324 | I S I W S C 2 0 1 9